I recently expanded an aggregate on a NetApp FAS storage system. The aggregate is configured with the Advanced Drive Partitioning (ADP) feature, and each physical disk is sliced into Root-Data-Data partitions.

For those who are not familiar with the NetApp ADP feature, here is the link for more information.

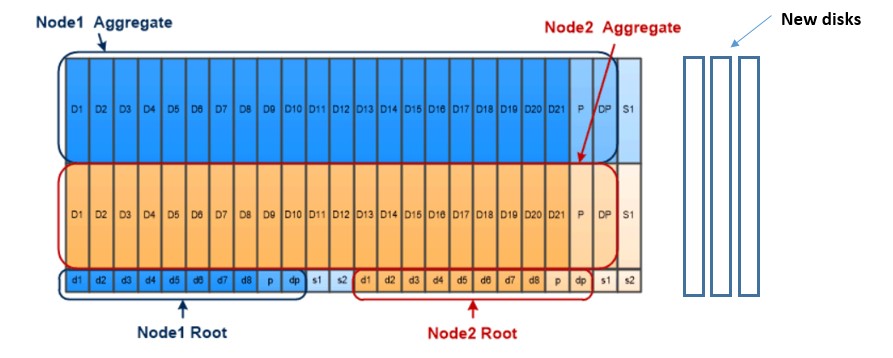

My environment is a typical setup, with three new disks to be added to two existing aggregates as follows:

- There are two existing aggregates, aggr-a (Node1) and aggr-b (Node2);

- Aggr-a is composed of the “data1” partition of each physical disk; and

- Aggr-b is composed of the “data2” partition of each physical disk.

The procedure for expanding an ADP-based aggregate differs from that for a standard aggregate. The key part is “when” and “how” ONTAP partitions the new disks and puts the right partition into the right aggregate. My procedure is listed below; it was validated on ONTAP version 9.6 SP3.

* Please note that aggregate expansion cannot be stopped or reversed once started. Please consult NetApp support if you are not familiar with NetApp operations. This post is for reference only.

- Disable disk auto-assignment with the command below:

storage disk option modify -autoassign off

- Physically add the three new disks to the existing disk shelf or to a new shelf. After being added, the disks should be in “unassigned” status.

- Check the partition ownership of the existing disks. The “data1” partition of each existing disk should be owned by node1, and the “data2” partition should be owned by node2.

> storage disk show -partition-ownership

| Disk | Partition | Home | Owner | Home ID | Owner ID |
| --- | --- | --- | --- | --- | --- |
| 1.1.0 | Container | node1 | node1 | xxxxxxxxx | xxxxxxxxx |
| | Root | node1 | node1 | xxxxxxxxx | xxxxxxxxx |
| | Data1 | node1 | node1 | xxxxxxxxx | xxxxxxxxx |
| | Data2 | node2 | node2 | xxxxxxxxx | xxxxxxxxx |
| …… | | | | | |
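If you save the output of `storage disk show -partition-ownership` to a text file, the ownership check can be scripted. The following is a minimal Python sketch, not NetApp tooling. It assumes the column order shown above (Disk, Partition, Home, Owner, Home ID, Owner ID), that the disk name appears only on the first row of each disk, and that node names contain no spaces. The helper names `parse_partition_ownership` and `find_mismatches` and the sample IDs are hypothetical.

```python
PARTITION_NAMES = {"container", "root", "data1", "data2"}


def parse_partition_ownership(text):
    """Return {disk: {partition: owner}} from captured CLI output (assumed layout)."""
    ownership = {}
    current_disk = None
    for line in text.splitlines():
        fields = line.split()
        if len(fields) >= 4 and fields[1].lower() in PARTITION_NAMES:
            # First row of a disk: Disk, Partition, Home, Owner, ...
            current_disk = fields[0]
            partition, owner = fields[1], fields[3]
        elif len(fields) >= 3 and fields[0].lower() in PARTITION_NAMES and current_disk:
            # Continuation row: Partition, Home, Owner, ...
            partition, owner = fields[0], fields[2]
        else:
            continue  # header, separator, or unrelated line
        ownership.setdefault(current_disk, {})[partition.lower()] = owner
    return ownership


def find_mismatches(ownership, expected):
    """List (disk, partition, actual_owner) where the owner differs from expected."""
    return [
        (disk, part, parts.get(part))
        for disk, parts in sorted(ownership.items())
        for part, owner in expected.items()
        if parts.get(part) != owner
    ]


# Placeholder sample; the IDs are made up for illustration.
SAMPLE = """\
Disk     Partition Home   Owner  Home ID   Owner ID
-------- --------- ------ ------ --------- ---------
1.1.0    Container node1  node1  111       111
         Root      node1  node1  111       111
         Data1     node1  node1  111       111
         Data2     node2  node2  222       222
"""

EXPECTED = {"data1": "node1", "data2": "node2"}
print(find_mismatches(parse_partition_ownership(SAMPLE), EXPECTED))
```

An empty list means every disk has “data1” on node1 and “data2” on node2, which is the state this procedure expects.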

- Manually assign all three new disks to node1. Assigning all new disks to the same node is important for the next step. After assignment, the three new disks should show up as “spare” disks for now.

storage disk assign -disk 1.10.0 -owner node1

storage disk assign -disk 1.10.1 -owner node1

storage disk assign -disk 1.10.2 -owner node1

storage disk show -ownership #Check the status of disk ownership.

storage aggregate show-spare-disks #Check the new disks are shown as “spare”
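With more than a handful of disks, typing each assign command by hand invites mistakes. A small Python sketch like the one below can generate the commands from a disk list so that every disk gets the same owner. It only builds strings for you to review and paste; `assign_commands` is a hypothetical helper, and the disk names are the ones from my environment.

```python
def assign_commands(disks, owner):
    """Build one 'storage disk assign' command per disk, all with the same owner."""
    return [f"storage disk assign -disk {disk} -owner {owner}" for disk in disks]


for command in assign_commands(["1.10.0", "1.10.1", "1.10.2"], "node1"):
    print(command)
```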

- Check the current aggregate layout to decide which plex and RAID group the new disks should be added to.

storage aggregate show-status -aggregate aggr-a

storage aggregate show-status -aggregate aggr-b

- The disks are now ready to be added to an aggregate. With ADP-enabled aggregates, in my case, I expanded aggr-a (Node1) first. Use the command below with “-simulate true” to simulate the aggregate expansion. This simulation step is important because aggregate expansion cannot be stopped or reversed once started. The command to simulate the expansion of the ADP-enabled aggregate is:

> storage aggregate add-disks -aggregate aggr-a -diskcount 3 -simulate true

Disks would be added to aggregate "aggr-a" on node "node1" in the following manner:

First Plex

RAID Group rg0, 3 disks (block checksum, raid_dp)

| Position | Disk | Type | Usable Size | Physical Size |
| --- | --- | --- | --- | --- |
| shared | 1.10.0 | SSD | 1.73TB | 1.73TB |
| shared | 1.10.1 | SSD | 1.73TB | 1.73TB |
| shared | 1.10.2 | SSD | 1.73TB | 1.73TB |

Aggregate capacity available for volume use would be increased by 4.67TB.

The following disks would be partitioned: 1.10.0,1.10.1,1.10.2
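As a sanity check on the reported 4.67TB, the arithmetic can be reproduced with a few lines of Python. This is only a sketch under two assumptions of mine: all three partitions join the existing RAID group as data partitions (parity is already in place), and ONTAP holds back roughly 10% of the added space as a reserve. The numbers in the simulation are consistent with that, but the reserve percentage is not stated in the output.

```python
def estimated_increase_tb(partitions_added, usable_tb_each, reserve_fraction=0.10):
    """Estimate usable capacity gained; reserve_fraction is an assumed value."""
    raw_tb = partitions_added * usable_tb_each
    return raw_tb * (1 - reserve_fraction)


# 3 partitions x 1.73TB = 5.19TB raw, less the assumed 10% reserve
print(f"{estimated_increase_tb(3, 1.73):.2f} TB")
```

If the figure from your own simulation is far from this kind of estimate, that is a hint to recheck which RAID group the disks are going into before running the real command.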

Please check the following items carefully in the simulation result:

- The plex to be expanded;

- The RAID group to be expanded; and

- The disks to be partitioned are the intended disks.

You can use the “-raidgroup” switch to specify which RAID group to expand. Also consider increasing the RAID group size if you need to expand an existing RAID group.
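The checks on the simulation result can also be done mechanically if you paste the output into a script. The sketch below pulls out the target aggregate, node, RAID group, and the list of disks to be partitioned using regular expressions. It assumes the wording of the simulation output is exactly as shown in this post; `summarize_simulation` is a hypothetical helper name.

```python
import re


def summarize_simulation(text):
    """Extract the key facts from 'add-disks -simulate true' output (assumed wording)."""
    target = re.search(r'aggregate "([^"]+)" on node "([^"]+)"', text)
    raid_group = re.search(r"RAID Group (\w+),", text)
    partitioned = re.search(r"would be partitioned:\s*(\S+)", text)
    return {
        "aggregate": target.group(1) if target else None,
        "node": target.group(2) if target else None,
        "raid_group": raid_group.group(1) if raid_group else None,
        "partitioned": partitioned.group(1).split(",") if partitioned else [],
    }


SIMULATION = """\
Disks would be added to aggregate "aggr-a" on node "node1" in the following manner:
First Plex
RAID Group rg0, 3 disks (block checksum, raid_dp)
The following disks would be partitioned: 1.10.0,1.10.1,1.10.2
"""

summary = summarize_simulation(SIMULATION)
intended = {"1.10.0", "1.10.1", "1.10.2"}
print(summary)
print("disks match:", set(summary["partitioned"]) == intended)
```

Comparing the parsed disk list against the set of disks you intended to add catches the case where ONTAP would partition a spare you did not mean to use.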

- Once the simulation result looks correct, remove “-simulate true” from the previous command to expand the aggregate. Type “y” to confirm the expansion. The expansion should finish in a few seconds.

storage aggregate add-disks -aggregate aggr-a -diskcount 3

- aggr-a should now be expanded. Check the total capacity of aggr-a to confirm. Also check the partitions of the newly added disks: they should have been partitioned in the last step as Root-Data1-Data2, the same as the existing disks. Confirm that the “data2” partition is now owned by node2.

> storage disk show -partition-ownership

| Disk | Partition | Home | Owner | Home ID | Owner ID |
| --- | --- | --- | --- | --- | --- |
| 1.10.0 | Container | node1 | node1 | xxxxxxxxx | xxxxxxxxx |
| | Root | node1 | node1 | xxxxxxxxx | xxxxxxxxx |
| | Data1 | node1 | node1 | xxxxxxxxx | xxxxxxxxx |
| | Data2 | node2 | node2 | xxxxxxxxx | xxxxxxxxx |
| …… | | | | | |

- Repeat the simulation and expansion steps above to expand aggr-b. Since the newly added disks are already partitioned and the “data2” partition is automatically owned by node2, no further partitioning is needed.

storage aggregate add-disks -aggregate aggr-b -disklist 1.10.0,1.10.1,1.10.2 -simulate true

storage aggregate add-disks -aggregate aggr-b -disklist 1.10.0,1.10.1,1.10.2

- Both aggregates, on node1 and node2, are now expanded with the newly added disks.
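Because the simulated and the real command must be identical apart from “-simulate true”, it can help to generate both from the same inputs instead of editing one by hand. The Python sketch below does that using only the switches that appear in this post (`-aggregate`, `-diskcount`, `-disklist`, `-simulate`). `add_disks_commands` is a hypothetical helper and only builds strings; it does not talk to the cluster.

```python
def add_disks_commands(aggregate, disklist=None, diskcount=None):
    """Return [simulate_command, real_command] for one aggregate expansion."""
    if (disklist is None) == (diskcount is None):
        raise ValueError("give exactly one of disklist or diskcount")
    if disklist is not None:
        selector = "-disklist " + ",".join(disklist)
    else:
        selector = f"-diskcount {diskcount}"
    real = f"storage aggregate add-disks -aggregate {aggregate} {selector}"
    return [real + " -simulate true", real]


# aggr-a was expanded by count, aggr-b by an explicit disk list
for command in add_disks_commands("aggr-a", diskcount=3):
    print(command)
for command in add_disks_commands("aggr-b", disklist=["1.10.0", "1.10.1", "1.10.2"]):
    print(command)
```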

You may wonder about the root partitions on the newly added disks. Do they need to be added to the root aggregate? My understanding is that this is not necessary unless the root aggregate needs more capacity and NetApp support instructs you to do so.
